Every generation has a version of this anxiety. The calculator will ruin our ability to do arithmetic. The word processor will make us lazy writers. Print will destroy memory. Before that, the threat was writing itself: Socrates worried that it would hollow out the mind, that a text couldn’t answer back, couldn’t respond to questions, couldn’t really know anything. He wasn’t wrong about any of it. He was wrong about what it meant.

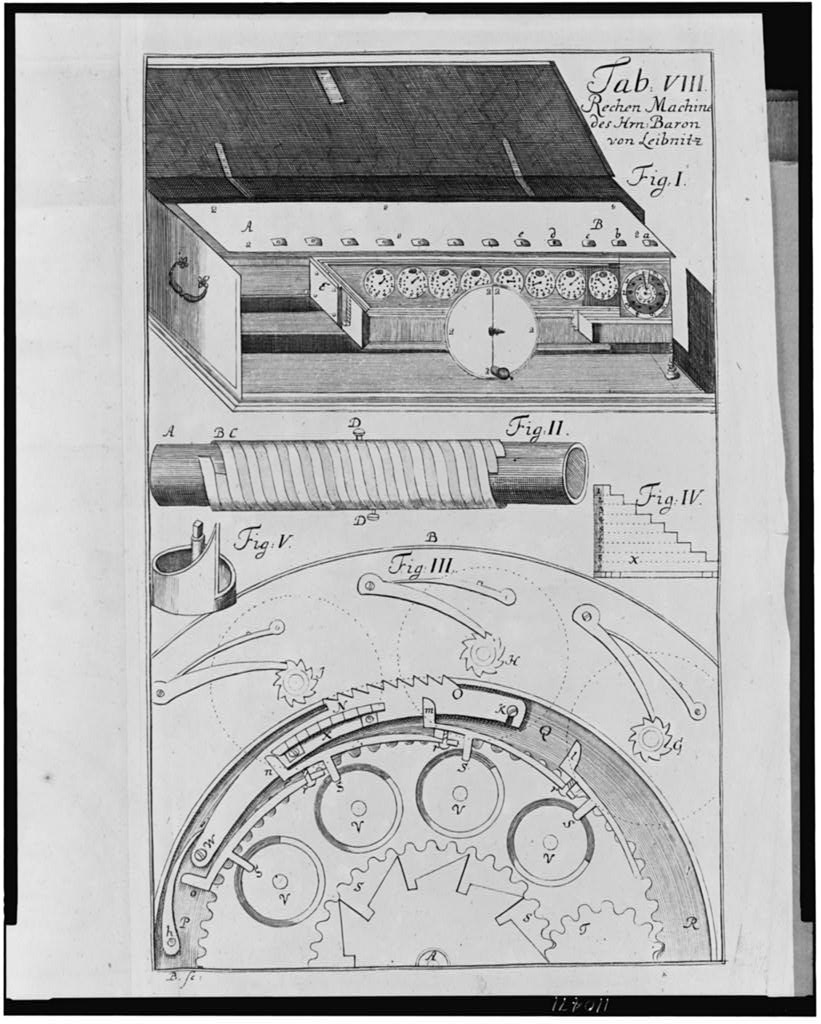

The history of human thought is largely a history of offloading. We did not develop civilization by becoming smarter in some raw biological sense. We developed it by building better external scaffolding. Writing gave us the ability to store and transmit knowledge beyond the span of a single life. The printing press distributed it. Cheap paper — and this is underrated — gave people a disposable surface to think on. The mathematics of the 17th and 18th centuries was probably not made possible by better brains. It was made possible by paper that cost almost nothing, so that a mathematician could fill sheets with false starts, scribble in margins, follow a line of reasoning that turned out to be wrong without that failure being precious. The scratch pad is a cognitive technology. Waste is a feature.

This semester I’m taking a seminar at Columbia called “Wittgenstein in the Machine,” and one idea has lodged itself in a way I can’t shake. In the Blue Book, Wittgenstein diagnoses what he calls a “mental cramp”: we ask “what is meaning?” and expect to find a solid object, a logical essence, something to point at. But meaning isn’t a thing. It’s a performance. Thinking, he writes, is simply “the activity of operating with signs” — and that activity can be performed by the hand or the mouth as much as the mind. The feel of a word, the sense that we mean it, is not the source of its meaning. It’s a byproduct. Mental experience is downstream of the linguistic role, not upstream. Consciousness, on this account, is less the engine of thought than its exhaust.

The implication that took me longest to accept: what’s “really relevant,” Wittgenstein writes, is that the contrasts between signs exist in the system of language — and “they need not in any sense be present in his mind when he utters his sentence.” Intelligence isn’t a property of the player. It’s a property of the game. This cuts directly against something I hadn’t noticed I assumed — something most of our AI discourse assumes too: that intelligence lives in brains, and that building AI means reverse-engineering it from the outside. Wittgenstein would say that gets it backwards. It was never in the brain alone. It was in the language, the system, the signs. Machine learning isn’t reverse-engineering the mind. It’s joining a game that was always bigger than any one player.

What this means is that the tools were never merely instrumental. They were constitutive. The mathematician with paper is not the same cognitive entity as the mathematician without it. The scholar who can search a text is not just faster — she thinks differently, asks different questions, follows different threads. The boundary between the thinker and the apparatus was always blurry. We just didn’t notice because the apparatus was quiet.

LLMs are loud. They generate text, which we associate with thought. They answer questions, which we associate with understanding. So the anxiety is louder too. We want to know: what’s left for the human? What’s mine?

But the question misunderstands what “mine” ever meant. When I work through an argument by writing it down, the sentences on the page are doing cognitive work I cannot fully do in my head. They hold things I would otherwise drop. They push back, in a way: the sentence that doesn’t cohere on paper didn’t cohere in my thinking either; I just couldn’t see it. The page is a collaborator. The claim that I “did the thinking” is true only in a loose sense, the same loose sense in which a writer “found the word” when the word was already there in the language, waiting.

The self that was supposedly at risk from AI was already more distributed than we imagined. It was porous, if you will. It was always partly in the tools, the notebooks, the libraries, the language itself — that vast inherited structure we did not make and cannot fully see. What we call intelligence has always been a collaboration between a brain and its scaffolding. The scaffolding got more sophisticated. That’s it.

This isn’t calming in the way “don’t worry about it” is calming. It’s calming the way a true description is calming. The thing you feared losing — some kernel of pure, unmediated, self-sufficient mind — was never quite there to begin with.

What was there, and still is, is something more interesting: a self that has always thought by reaching outward, that has always been partly made by its tools.

We were never the ghost. We were always the machine.